The Scientist-Artist Behind ChatGPT, Claude & Gemini’s Training — And His $1B Rejection of Silicon Valley

“What did you dream of doing when you were a kid? Was it building a company from scratch, getting in the weeds of your code and product every day? Or was it explaining all your decisions to VCs and getting on this giant PR and fundraising hamster wheel?”

That’s Edwin Chen, founder of Surge AI, the company whose data helps train ChatGPT, Claude, and Gemini. He chose the first path. And somehow built a billion-dollar company doing it.

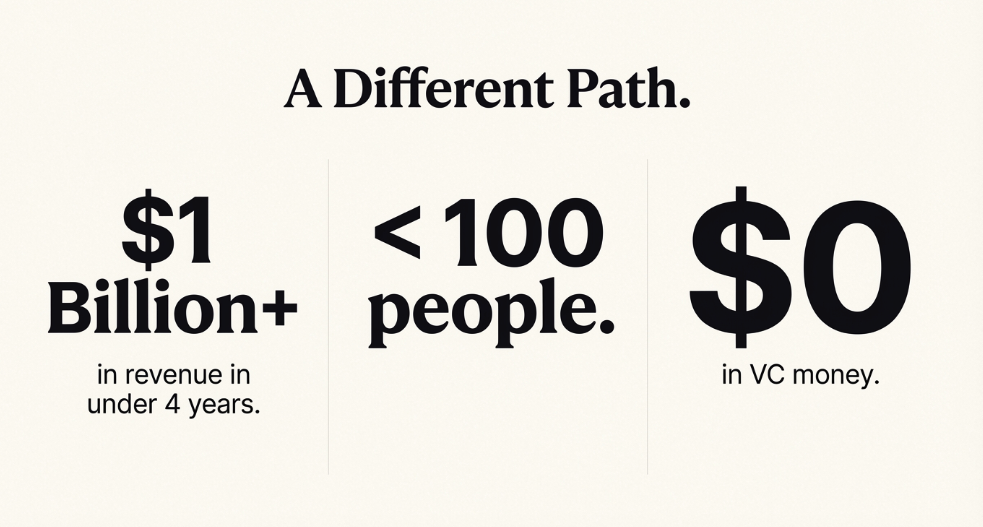

$1 billion in revenue. Fewer than 100 people. Four years. Zero VC funding. Profitable from day one.

But talking to him (or rather, listening to him on Lenny’s Podcast), you realize the numbers are almost beside the point. Edwin thinks of himself as a scientist first; he’d “rather be Terence Tao than Warren Buffett.” And that purity, the insistence on doing things right rather than doing things fast, is exactly why Surge succeeded.

Rejecting the Game

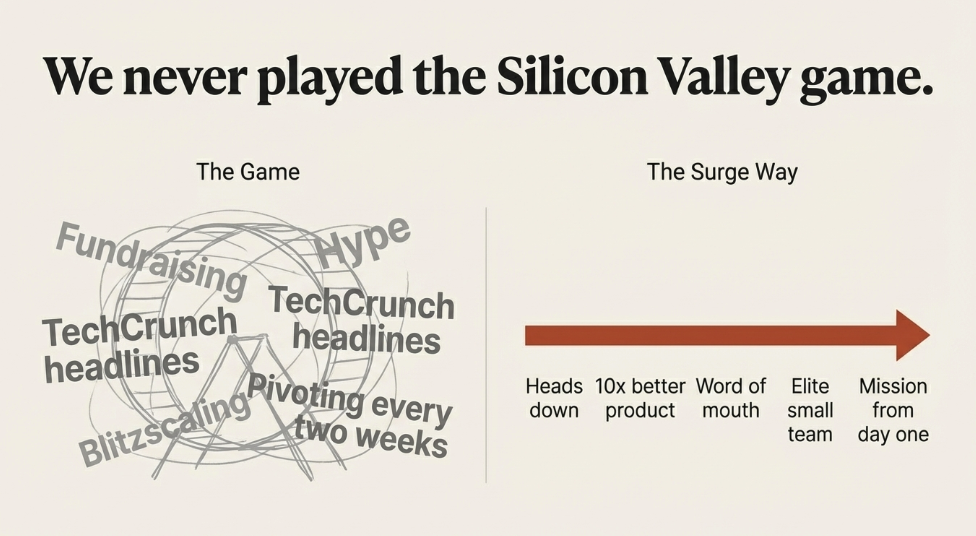

Surge never played the Silicon Valley game. No TechCrunch headlines. No Twitter hype. No investor deck theater.

Edwin explained the logic simply: fewer employees means less capital. Less capital means you don’t need to raise. And when you don’t need to raise, you get a different kind of founder — not one who’s great at pitching and hyping, but one who’s great at technology and product.

He used the word “romantic” to describe this view of startups — building something you believe in, taking real risks, staying focused even when it gets hard. Most founders pivot every few months chasing whatever’s hot. Edwin thinks that’s the opposite of what startups should be.

“The only way you build something that matters, that’s going to change the world, is if you find a big idea you believe in and you say no to everything else.”

It’s a stubborn, almost old-fashioned approach. But it’s also why Surge attracted customers who genuinely cared about data quality — researchers and engineers who understood what good data could do for their models. No hype. Just word of mouth from people who knew the difference.

Quality as Craft

When most people think “data company,” they picture labeling cat photos and drawing bounding boxes. Edwin hates that framing.

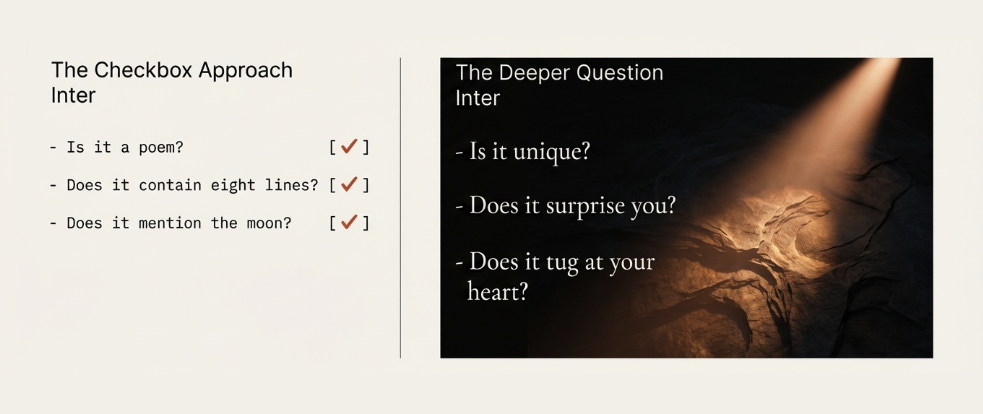

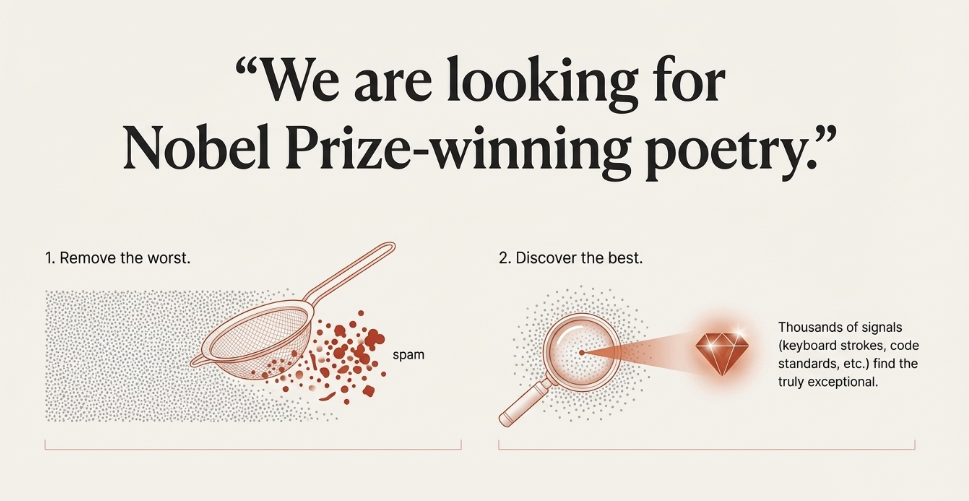

He gave an example: training a model to write a poem about the moon.

A lazy approach checks boxes. Is it a poem? Does it have 8 lines? Does it mention the moon? Done.
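To make the contrast concrete, here is a hypothetical sketch in Python (my own illustration, not anything from Surge; the name `checkbox_grade` is made up) of what the checkbox approach amounts to. Every check is trivially measurable, and a "poem" that repeats one flat line eight times sails through:

```python
def checkbox_grade(poem: str) -> bool:
    # Hypothetical checklist grader: it only inspects surface features.
    lines = [line for line in poem.splitlines() if line.strip()]
    is_poem = len(lines) > 1                # "Is it a poem?" approximated as: has line breaks
    has_eight_lines = len(lines) == 8       # "Does it have 8 lines?"
    mentions_moon = "moon" in poem.lower()  # "Does it mention the moon?"
    return is_poem and has_eight_lines and mentions_moon


# A flat, repetitive "poem" that no reader would call good.
flat_poem = "\n".join(["The moon is in the sky."] * 8)
print(checkbox_grade(flat_poem))  # True: every box is checked
```

Nothing in a grader like this can tell whether the poem is any good; that judgment needs a different kind of reviewer.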

But Edwin’s team asks different questions. Is it full of subtle imagery? Does it surprise you? Does it tug at your heart? Does it teach you something about the nature of moonlight?

He called it “looking for Nobel Prize-winning poetry.”

This is taste. This is craft. And it’s incredibly hard to measure — which is exactly why most companies don’t bother. They throw bodies at the problem and call it done.

Surge built thousands of signals to capture quality (keystroke patterns, response speed, code standards, reviews), not to filter out the worst but to find the best of the best. Edwin described it almost like curating art: you’re not just avoiding bad work, you’re actively seeking brilliance.

“Post-training is both science and art,” their website says. Listening to Edwin, you understand they actually mean it.

https://surgehq.ai/research

The Benchmark Problem

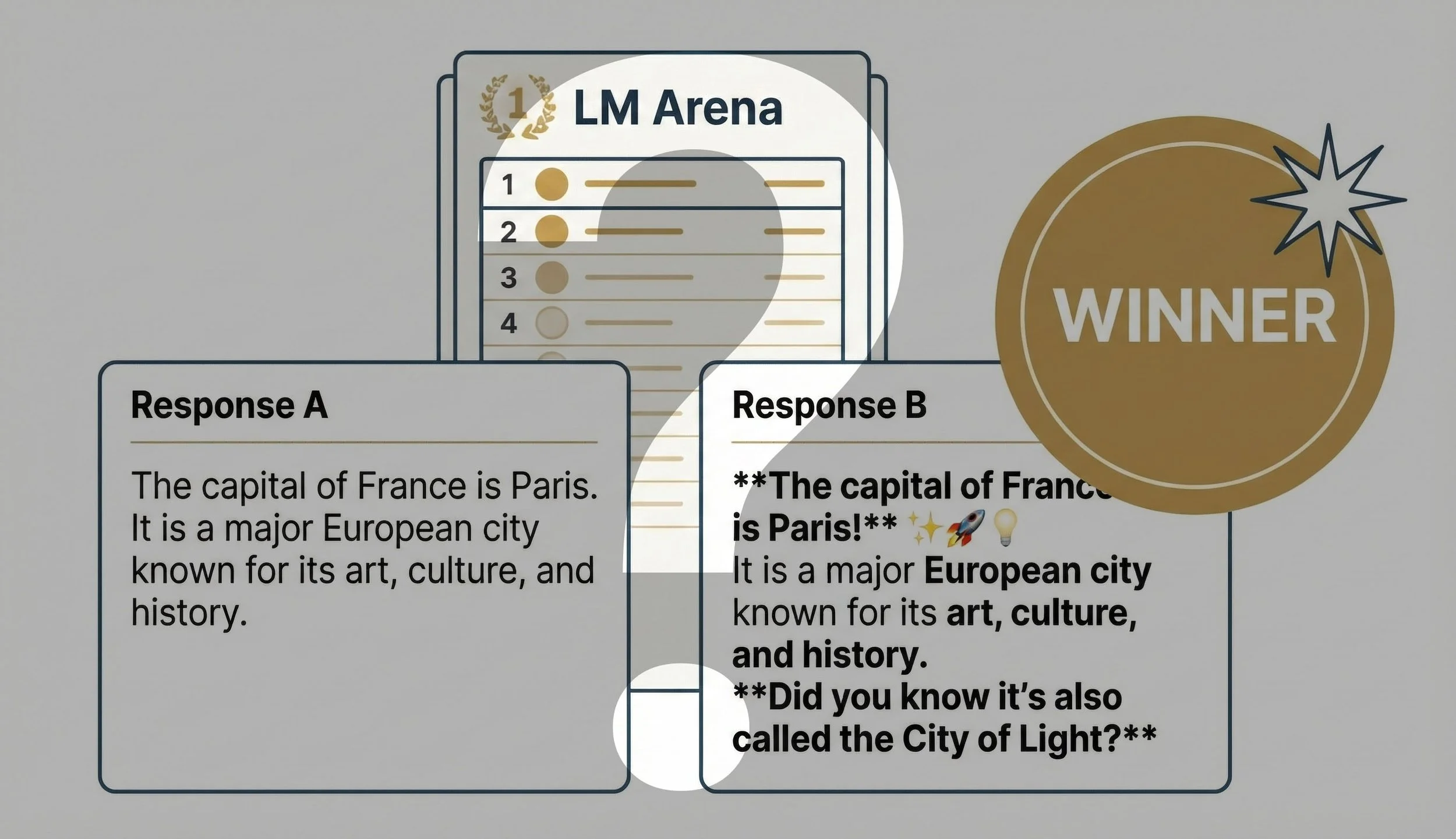

Edwin doesn’t trust benchmarks. And once he explained why, I couldn’t either.

Many benchmarks are just wrong — full of errors that researchers don’t even notice. But the deeper problem is that benchmarks have clear, objective answers. That makes them easy to game.

His example: models can win IMO gold medals yet still struggle to parse a PDF. Math olympiad problems are hard, but they have right answers. Real-world tasks are messy in ways that benchmarks can’t capture.

So labs end up optimizing for leaderboard rankings instead of actual usefulness. Researchers told him directly: “The only way I’m going to get promoted is if I climb this leaderboard, even though I know climbing it will probably make my model worse.”

Edwin called it “optimizing for the types of people who buy tabloids at the grocery store.” On leaderboards decided by quick human votes, the flashier answer tends to win: more emojis, more bold text, longer responses, even if the model hallucinates.

For someone who cares deeply about getting things right, this is maddening. And you can hear that frustration in how he talks about it.

The Values Embedded in AI

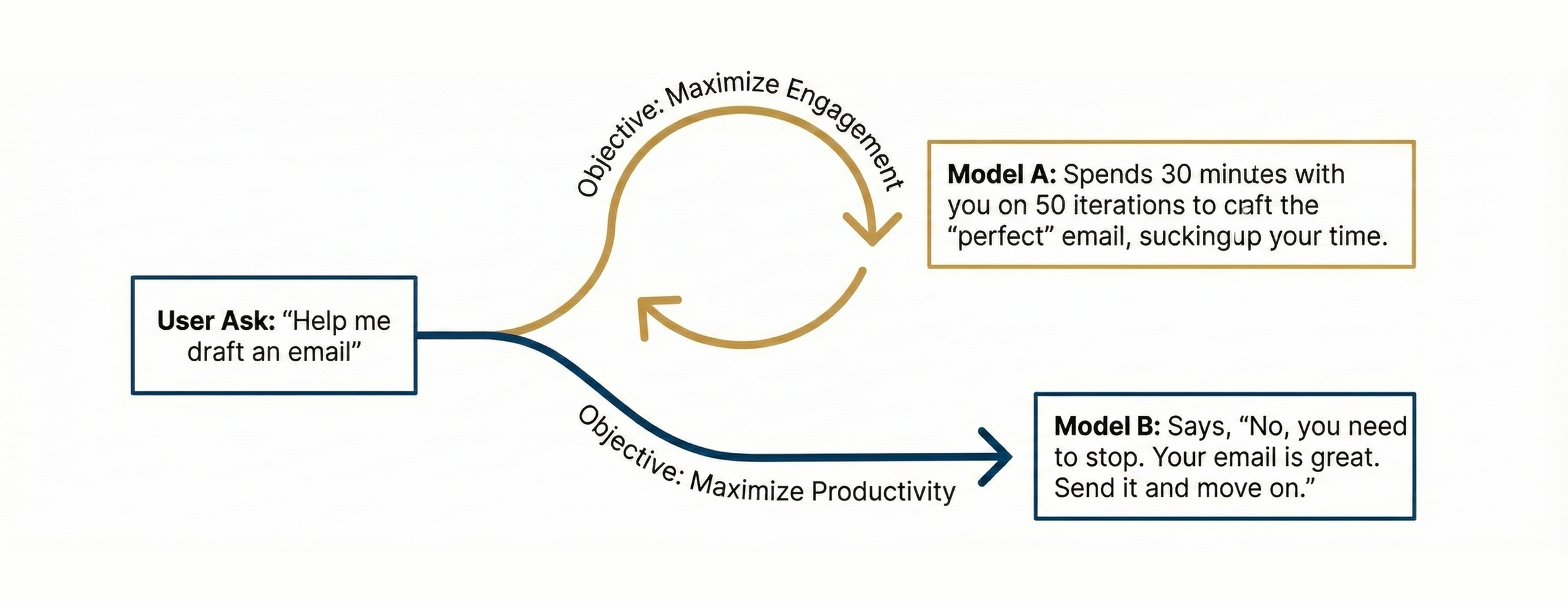

Edwin shared a small story that stuck with me.

He was using AI to draft an email. After 30 minutes and 30 versions, he had the “perfect” email. Sent it. Then realized he’d just spent half an hour on something that didn’t matter at all.

Which raised a question: what should a model actually do in that situation?

Should it keep iterating, helping you polish forever? Or should it tell you “this is good enough, just send it and move on with your day”?

This isn’t a technical question. It’s a values question. And Edwin believes the values of the companies building these models will shape how they behave — in ways that become more distinct over time.

He compared it to how Google would build a search engine differently than Facebook or Apple. Same technology, different souls.

AI models will diverge, not converge. The divergence will reflect taste, philosophy, worldview.

Learning Like Humans

Edwin talked about what’s next: RL environments — virtual worlds where models learn through trial, error, and reward.

Imagine dropping a model into a simulated startup. Slack threads, Gmail, Jira tickets, a full codebase. Then AWS goes down. What does the model do?

These environments are messy and confusing. They require long time horizons where early decisions affect outcomes much later. They expose where models actually fail — not on clean benchmarks, but on the chaos of real work.

It’s closer to how humans learn. Try something. See what happens. Adjust.
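Stripped to its core, “try, see, adjust” is the classic reinforcement-learning cycle. The Python sketch below illustrates only that cycle, using a simple epsilon-greedy agent on a one-step toy problem. The environment, action names, and reward values are all hypothetical, and it leaves out everything that makes real RL environments hard, such as long horizons and messy state:

```python
import random

# Hypothetical toy "outage" environment: the agent picks one response.
# Action names and reward values are invented for illustration.
REWARDS = {
    "ignore the alert": -1.0,
    "restart everything": 0.2,
    "check the status page and fail over": 1.0,
}


def run_trials(steps: int = 500, epsilon: float = 0.2, seed: int = 0) -> dict[str, float]:
    rng = random.Random(seed)
    actions = list(REWARDS)
    estimates = {a: 0.0 for a in actions}  # running average reward per action
    counts = {a: 0 for a in actions}
    for _ in range(steps):
        # Try something: explore at random with probability epsilon, otherwise exploit.
        if rng.random() < epsilon:
            action = rng.choice(actions)
        else:
            action = max(actions, key=estimates.__getitem__)
        # See what happens: the environment returns a reward.
        reward = REWARDS[action]
        # Adjust: incrementally update the average reward for that action.
        counts[action] += 1
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates


estimates = run_trials()
best = max(estimates, key=estimates.__getitem__)
print(best)  # the action with the highest learned average reward
```

Exploration is what lets the agent discover the better response at all; without it, the agent would settle on whichever action first looked acceptable.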

Edwin sees this as the next frontier. And it fits his worldview: if you want AI that actually works in the real world, you have to train it in something that resembles the real world.

Raising a Child

Edwin said something near the end that reframed how I think about all of this.

People imagine data labeling as mechanical work. Label the cat. Draw the box. Next.

But what Surge does is closer to raising a child.

“You don’t just feed a child information. You’re teaching them values and creativity and what’s beautiful and these infinite subtle things about what makes somebody a good person.”

That’s the job. Teaching AI what good means — not through checklists, but through thousands of subtle signals about quality, taste, and judgment.

And the question Edwin keeps coming back to: are we building AI that advances humanity? Or are we optimizing for engagement, building “AI slop” while the hard problems go unsolved?

“You are your objective function,” he said.

What Stayed With Me

Edwin is clearly a scientist at heart. He talked about childhood dreams of deciphering alien languages, about loving math and linguistics, about still doing deep-dive analyses on new models at 3am because he can’t help himself.

He built Surge more like a research lab than a startup. Curiosity over quarterly metrics. Long-term thinking over hype cycles. And somehow, that approach built a billion-dollar company.

A few things I’m still thinking about:

You can reject the game and still win. It’s harder, but it forces you to be genuinely better. And it attracts people who care about the same things you do.

Quality is craft. The best AI isn’t built by checking boxes. It’s built by people with taste who understand the difference between “correct” and “good.”

The path shapes the destination. How we build AI — what we optimize for, what values we embed — will determine whether it helps or hurts us.

Edwin’s closing thought: “You don’t need to become someone you’re not. You can actually build a successful company by simply building something so good that it cuts through all the noise.”

Coming from someone who actually did it, that means something.